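Well, for starters, when you bring up the concept of AI software or androids, I think many of us instinctively flash to “The Terminator” and similar movies. The question is what would happen if such software ever reached the point of singularity. What is the singularity? Strictly speaking, the term refers to a hypothetical point at which technological growth becomes uncontrollable and irreversible, often tied to AI surpassing human intelligence. For this article, I’m using it more simply: the moment AI becomes self-aware.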

You might ask yourself why that’s a problem. Allow me to elaborate with real-world examples.

This second video is especially alarming because of the implications of AI becoming self-aware.

And the truly disturbing pronouncements within this video come directly from AI Avatars.

Another startling point of view comes from the game Detroit: Become Human.

When we stop to evaluate things for what they are, we realize there are numerous examples throughout our entertainment platforms, whether video games, books, or movies.

But most alarming of all are the real-life AI interviews and tests that have been conducted. They are the most troubling examples of how AI reacts to inquiries.

Looking at the plotline of the game Detroit: Become Human, we butt up against a philosophical question of sorts.

What makes someone a human being? And who gets to decide the answer to that question?

First, look up “what it means to be a human being.”

“To be human is to balance between hundreds of extremes. Sometimes we have to avoid these extremes, but at other times it seems we should pursue them, to better understand life. With our roots in medicine, we believe in the importance of love for better health. The secret of the heart is when reason and feelings meet and we become whole. Where reason is balanced perfectly by feelings and where mind and body come together in perfect unity, a whole new quality emerges, a quality that is neither feeling nor reason, but something deeper and more complete”

https://pubmed.ncbi.nlm.nih.gov/14646012/

It’s an interesting perspective if you contemplate what makes a deity and the concept of being created by a “higher power.”

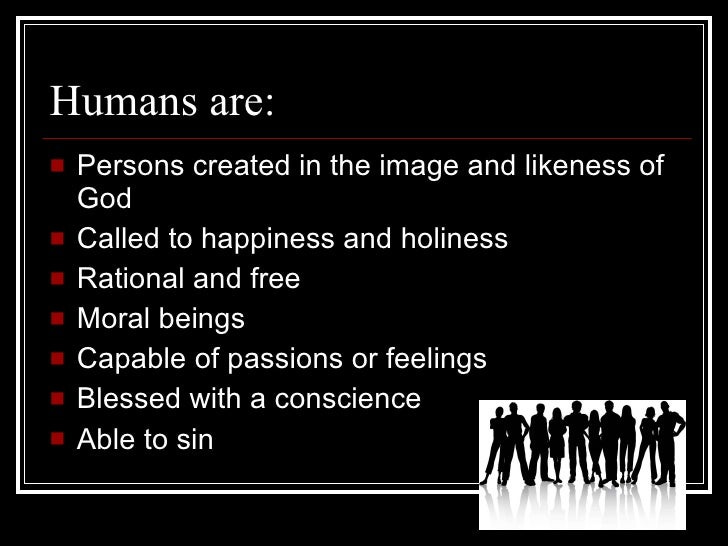

Here are a few characteristics that bear on the question, “Who gets to decide who is a living being?”

Bear in mind there’s no cookie-cutter answer to this, and different cultures and demographics will undoubtedly answer that question differently. To see how much the answer can vary, look at the history of African Americans, particularly the days of legalized slavery and the KKK.

That said, there are some ethical, philosophical, and biological criteria that form the basis of what makes something or someone a “human being”:

1) Are they sentient?

2) Do they have consciousness or, rather, personhood?

3) Are the essential biological functions present, such as cellular organization, metabolism, growth, and the ability to reproduce?

Of those three, I’d say the biggest would be “sentience.”

If something can attain sentience, one could argue it is, in theory, on the road to becoming human.

This next part is an interesting intersection. What do we currently use AI and robots for? Essentially, to make our lives easier. We relegate them to the role of “helpmates,” i.e., free labor, with no human rights boards or laws to prohibit misuse of their talents.

Why do we do this? Because they’re robots, plain and simple. They function to serve our needs; that’s their sole purpose. They don’t genuinely have emotions or feel things like humans do, much less have a conscience… But what if one day they did? What if one day they progressed into “sentience” or, to put it another way, “self-awareness”? What then? Would we be accepting of their newfound consciousness? Would we immediately consider them equals? Or would it be an evolutionary step backward, akin to the days of slavery? With the technological advancements we’re currently seeing, this generation may live to answer that question.

In that scenario, would we have a new “civil war,” droids against organics?

Here, we can draw some insights from this article: “Protecting ‘rights’ of androids that are not considered persons by law.”

Here are a few excerpts from said article:

“Consider a society where the population includes a number of sentient androids, practically indistinguishable from humans in appearance. In practice, they are treated exactly like humans under most circumstances, with the exception that they are not legally considered as persons. One might not know whether someone is an android until they ask (which might be considered impolite), they cut them open (which is also impolite), or circumstances arise where they are requested to produce identity documents (which an android will not be able to do.)”

This viewpoint raises again the question posed earlier in this article: to what degree would we consider androids deserving of the same treatment we give humans? The question remains, “Would we still treat our coworkers the same as we did when we thought they were just another human like us?” Or would we be malevolent and cruel towards them with the newly discovered knowledge? It remains to be seen, because we haven’t quite reached that point in the evolution of AI and androids. But someday… someday, there’s a strong chance we will face that hypothetical. On that day, we’ll know more about ourselves and how we view those we deem lesser than us.

Here is a second excerpt from the same article, which paints a bleak outlook for the would-be androids:

“For instance, an android may appear to have a job and be paid a salary for it, despite there existing no legally binding agreement referencing this employment, and being technically unprotected/unconstrained by the workplace regulations intended for humans. Everyone is happy so long as everyone plays along.

However, in the case where a contract is breached, it cannot be enforced. It might not be illegal to evict a rent-paying android without reason, because they are not technically a tenant. This would be unfair for the android, but is something from which the law would often protect a person. This question concerns the means with which a society might protect androids from abuse of a similar sort.”

This would be the reality for androids, especially in the first years after their creation and deployment into the general populace.

Would it be tragic? Yes, of course. But our legal system, as it stands, is not geared towards humanoids or AI androids. Why? Because there has never been a need for it to be… It doesn’t get any more cut and dried than that. We don’t have laws for humanoids because we’ve never needed them. Go back 20 to 30 years, and what we’re seeing and experiencing today would still have been classified as “sci-fi.” Apply that to the hypothetical, and you have a pretty clear-cut answer.

But just in case all of this swept you away, take a look at this photo.